The Trivy (Aqua) breach wasn’t an LLM incident.

But it exposed a problem that increasingly affects LLM systems too.

Not because attackers need to target models directly, but because LLM infrastructure now sits inside the same build, deployment, and runtime paths that attackers already know how to abuse.

The shift in the supply chain

Supply chain attacks used to be about one thing: getting malicious code into what you run.

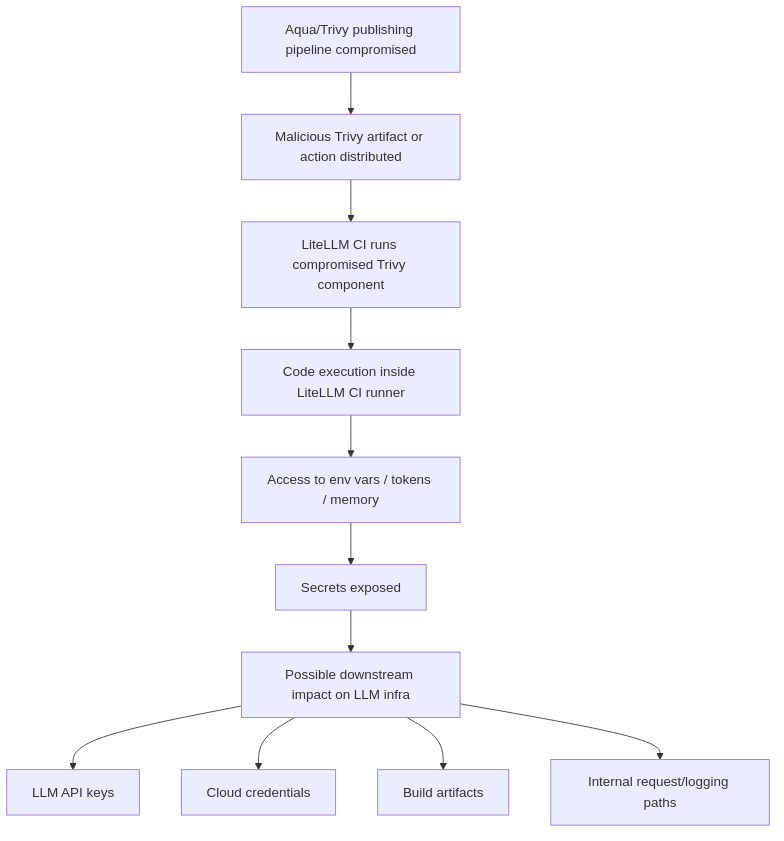

The Trivy incident fits this model well:

- Attacker gains access to CI/CD

- Publishes malicious artifacts

- Downstream systems execute them

But in modern systems, especially LLM-connected ones, the supply chain is no longer just about what gets built. It is also about what gets access to data, requests, and control paths.

Today, the supply chain includes:

- CI/CD pipelines

- Security tooling, like Trivy

- LLM middleware, such as LiteLLM, gateways, and proxies

- Logging and observability systems

- Dataset preparation and evaluation pipelines

And critically, these components don’t just execute code.

They see, process, and move sensitive data.

In the case of LiteLLM specifically, the supply chain doesn’t stop at how it’s built or distributed - it extends to how it routes requests, handles headers, logs data, and integrates with external systems.

LiteLLM effectively becomes part of the execution path between your application and model providers. That means its “supply chain” includes:

- How requests are constructed and forwarded

- How headers (including authorization) are handled or transformed

- How retries, fallbacks, and routing decisions are made

- How logging and tracing capture request/response data

- How plugins, guardrails, or middleware hooks interact with payloads

These are not trivial implementation details; they define how sensitive data flows through the system.

So when something goes wrong, the impact is not limited to a bad package version or a compromised release artifact. It can affect:

- What data is exposed

- How requests are interpreted

- And ultimately, how the model behaves in production

For LLM middleware, the supply chain doesn’t end at build time. It extends into runtime behavior.
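One of the runtime concerns listed above, how logging captures authorization headers, can be illustrated with a small sketch. This is not LiteLLM's API; the `redact_headers` helper and the `SENSITIVE_HEADERS` set are hypothetical names chosen for illustration, and the header list is an assumption you would adapt to your own providers.

```python
from typing import Mapping

# Header names treated as sensitive in this sketch.
# Assumption: this list is illustrative, not taken from any specific gateway.
SENSITIVE_HEADERS = {"authorization", "x-api-key", "api-key", "cookie"}


def redact_headers(headers: Mapping[str, str]) -> dict[str, str]:
    """Return a copy of the headers that is safer to write to logs or traces."""
    redacted = {}
    for name, value in headers.items():
        # HTTP header names are case-insensitive, so compare in lowercase.
        if name.lower() in SENSITIVE_HEADERS:
            redacted[name] = "[REDACTED]"
        else:
            redacted[name] = value
    return redacted
```

The point is less the helper itself than where it sits: redaction has to happen before any logging or tracing hook sees the request, otherwise the observability layer becomes another place where credentials live.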

What actually happened in the Trivy breach

At a high level:

- A GitHub Actions misconfiguration enabled token exposure

- Attackers gained access to publishing pipelines

- Malicious versions of Trivy artifacts were released

- Version tags were manipulated

- CI pipelines unknowingly executed attacker-controlled code

Once that code ran inside CI, the trust boundary was already broken.
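Because version tags can be moved, one commonly recommended mitigation is to reference GitHub Actions by full commit SHA rather than by tag. The sketch below is a rough check for that, assuming workflow files are read as plain text; `unpinned_actions` is a hypothetical helper, and the regex-based parsing is a simplification that a real YAML parser would handle more robustly.

```python
import re

# Matches "uses: owner/repo@ref" lines, with or without a leading list dash.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*([^\s#]+)", re.MULTILINE)
# A full-length commit SHA is 40 lowercase hex characters.
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that are not pinned to a full commit SHA."""
    unpinned = []
    for raw_ref in USES_RE.findall(workflow_text):
        ref = raw_ref.strip("'\"")
        # Local actions and Docker images are out of scope for this sketch.
        if ref.startswith("./") or ref.startswith("docker://"):
            continue
        _, _, version = ref.partition("@")
        if not FULL_SHA_RE.match(version):
            unpinned.append(ref)
    return unpinned
```

Run against a workflow that mixes `owner/repo@v4` style references with SHA-pinned ones, this returns only the tag-based references. Pinning does not prevent a compromise upstream, but it means a moved tag does not silently change what your pipeline executes.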

Where LLM systems come into play

Modern pipelines increasingly interact with LLMs.

It’s now common to see CI jobs that:

- Run prompt evaluations

- Call LLM APIs

- Generate or validate prompts

- Process datasets for fine-tuning

- Run agent workflows for testing

That means secrets like:

- OPENAI_API_KEY

- ANTHROPIC_API_KEY

- cloud credentials

- internal service tokens

are often present inside the CI runtime.

The implication is straightforward: if malicious code executes inside CI, it can access anything the job can access, including LLM API keys.

But it doesn’t stop at secrets.

If your pipeline builds, templates, or validates prompts, that same access can be used to subtly manipulate them by inserting hidden instructions, weakening guardrails, or creating conditions that make downstream jailbreaks more likely.

In other words, the attacker is not limited to stealing credentials. They may also be able to shape the conditions under which the model behaves in production.

The attack vector in practice

Once a compromised Trivy component executed inside CI, the attacker had the same access as the job itself.

That includes environment variables, local files, credentials, and any data the pipeline processes during execution.

This is not unique to LLM environments. The difference is that LLM-related pipelines increasingly expose not just secrets, but prompts, datasets, evaluation artifacts, and model-facing configuration.

From upstream compromise to direct downstream victim: LiteLLM

LiteLLM is now being reported as a direct victim in the broader TeamPCP supply-chain campaign, with malicious PyPI releases affecting versions 1.82.7 and 1.82.8. Public reporting has linked that activity to the same broader campaign associated with the Trivy incident.

- Malicious LiteLLM packages were reported on PyPI

- Public reporting and maintainer discussions link that activity to the broader TeamPCP campaign

- LiteLLM’s own issue tracker reflects emergency discussion around compromised versions 1.82.7 and 1.82.8

Individually, these may look like normal post-incident artifacts.

Taken together, they point to a broader supply-chain reality:

Once an upstream campaign compromises trusted build and release paths, downstream projects can become direct victims through their own package distribution channels, CI environments, or release credentials.

The exact intrusion path and sequencing should still be treated carefully unless confirmed by maintainers or incident responders.

Even so, the reporting highlights an important dynamic:

- Upstream compromise (Trivy / TeamPCP campaign) - compromise of trusted build, publish, and execution paths

- Direct downstream victimization (LiteLLM) - malicious package distribution, credential-theft risk, and possible integrity loss across environments that installed the affected versions
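To see whether an environment installed one of the reported versions, a quick check against the package metadata is enough. The sketch below uses Python's standard `importlib.metadata`; the version set comes from the public reporting cited above, while the helper names `is_reported_bad` and `check_installed` are hypothetical.

```python
from importlib import metadata

# Versions named in the public reporting discussed above.
REPORTED_BAD_VERSIONS = {"1.82.7", "1.82.8"}


def is_reported_bad(version: str) -> bool:
    """True if the version string matches one of the reported releases."""
    return version.strip() in REPORTED_BAD_VERSIONS


def check_installed(package: str = "litellm") -> str:
    """Describe the installed version of a package, if any."""
    try:
        version = metadata.version(package)
    except metadata.PackageNotFoundError:
        return f"{package}: not installed"
    status = "REPORTED MALICIOUS" if is_reported_bad(version) else "not in reported list"
    return f"{package} {version}: {status}"


if __name__ == "__main__":
    print(check_installed())
```

A clean result here only describes the current environment. Build caches, container layers, and lockfiles from the affected window would need the same check.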

Separate from the confirmed malicious-package reporting, smaller integrity anomalies, such as versioning inconsistencies across docs, tags, and package registries, are still worth watching because they can signal release-process drift during or after incident response.

They can indicate:

- build pipeline tampering

- partial or inconsistent releases

- or simply gaps introduced during incident response

In LLM ecosystems, these signals matter more than they used to, because the same pipelines now:

- Build code

- Configure AI systems

- And handle sensitive credentials

What becomes possible after the point of infection

The Trivy breach is often framed as a secret theft problem.

But the more important capability was deeper than exfiltration: attacker-controlled code executing inside environments that build, test, and configure LLM-connected systems.

From that point, a range of second-order effects become possible, not because they were observed in this incident, but because the access pattern allows them.

Silent prompt drift

If CI builds or templates prompts, an attacker can introduce subtle changes:

- Hidden instructions

- Reordered system prompts

- Small formatting tweaks that change model behavior

These changes can pass tests but shift outcomes in production.
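One way to make this kind of drift visible is to fingerprint prompt templates and compare them against hashes that were approved in review. This is a minimal sketch under the assumption that the approved hashes are stored somewhere the CI job cannot write to; `fingerprint` and `drifted_prompts` are hypothetical names.

```python
import hashlib


def fingerprint(text: str) -> str:
    """SHA-256 over the exact bytes, so invisible characters also change the hash."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def drifted_prompts(prompts: dict[str, str], approved: dict[str, str]) -> list[str]:
    """Return names of prompts that are new, missing, or differ from the approved hash."""
    drifted = [
        name for name, text in prompts.items()
        if approved.get(name) != fingerprint(text)
    ]
    # A prompt that was approved but no longer exists is also a change worth surfacing.
    drifted.extend(name for name in approved if name not in prompts)
    return sorted(drifted)
```

Hashing the exact bytes matters here: a zero-width character or reordered whitespace leaves the prompt looking identical in a diff viewer but produces a different fingerprint.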

Dataset shaping

If pipelines prepare evaluation or fine-tuning data, an attacker can:

- Insert targeted examples

- Bias distributions

- Plant trigger phrases

The impact is persistent and hard to attribute back to CI.

Evaluation skew

If CI generates or stores evaluation results, an attacker can:

- Inflate scores

- Suppress failures

- Alter baselines

The result is false confidence in a system whose measured performance no longer reflects reality.
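A partial defense is to recompute summary scores from raw per-example results rather than trusting a stored number. The sketch below assumes a simple pass/fail evaluation and uses hypothetical helper names. It only catches an inflated summary; if the per-example results are altered too, the recomputation would need to happen in a separate environment.

```python
def recompute_accuracy(per_example: list[bool]) -> float:
    """Accuracy derived directly from per-example pass/fail results."""
    if not per_example:
        return 0.0
    return sum(per_example) / len(per_example)


def score_mismatch(reported: float, per_example: list[bool], tol: float = 1e-9) -> bool:
    """True if the reported score disagrees with the recomputed one."""
    return abs(reported - recompute_accuracy(per_example)) > tol
```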

Tooling and agent surface changes

If pipelines define tool schemas or agent configs, an attacker can:

- Modify tool definitions

- Expand permissions

- Introduce new capabilities

Downstream agents may gain unintended access without any obvious change in application code.
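Changes like these are easier to catch when tool permissions are compared against an approved baseline on every build. The sketch below assumes tool configs can be reduced to a mapping from tool name to a set of permission strings, which is a simplification; `added_capabilities` is a hypothetical helper, not part of any agent framework.

```python
def added_capabilities(
    approved: dict[str, set[str]], current: dict[str, set[str]]
) -> dict[str, set[str]]:
    """Return tools or permissions present in `current` but not in `approved`."""
    added: dict[str, set[str]] = {}
    for tool, scopes in current.items():
        if tool not in approved:
            # An entirely new tool: report everything it can do.
            added[tool] = set(scopes)
        else:
            extra = set(scopes) - set(approved[tool])
            if extra:
                added[tool] = extra
    return added
```

An empty result means nothing was added. Removed tools are deliberately ignored here, since the risk described above is expansion rather than reduction.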

Data exposure beyond keys

Beyond API keys, CI often touches:

- Prompts and completions

- Retrieved documents, or RAG context

- Embeddings and traces

An attacker can extract or mirror these quietly, especially if observability is already in place.

Integrity drift across releases

Small inconsistencies, like documentation referencing non-existent package versions, can be a signal of something deeper:

- Mismatched artifacts

- Partial releases

- Or tampering during build and publish steps

Individually, these can look harmless. Over time, they may signal that the system in production no longer cleanly matches the system that was built, tested, or documented.
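A simple consistency check across sources can surface this kind of drift early. The sketch assumes you have already collected the version strings seen in each place (docs, git tags, package registry); how they are collected is left out, and `version_drift` is a hypothetical helper.

```python
def version_drift(sources: dict[str, set[str]]) -> dict[str, set[str]]:
    """Map each version to the sources that do NOT list it."""
    all_versions: set[str] = set().union(*sources.values())
    drift: dict[str, set[str]] = {}
    for version in all_versions:
        missing = {name for name, versions in sources.items() if version not in versions}
        if missing:
            drift[version] = missing
    return drift
```

For example, if the docs mention a version that neither the tags nor the registry contain, the result maps that version to `{"tags", "registry"}`. A mismatch is not proof of tampering, but it is a cheap signal to investigate.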

A new mental model

We used to think about supply chain risk as: can someone inject malicious code into what I run?

We now also need to ask: what trusted components can access my data, influence my requests, or quietly alter system behavior?

The new supply chain reality

The Trivy breach showed how attackers can enter the supply chain.

The LiteLLM signals show how downstream LLM infrastructure can become part of the blast radius, even when the failure mode no longer looks like a classic package compromise.

Together, they point to the same shift: the supply chain is no longer just about code. It is about access, visibility, and subtle control over systems that handle sensitive data.

And in LLM systems, that includes your API keys, your prompts, and your models’ behavior.

Once an attacker is inside your CI, they’re not just stealing your secrets - they’re potentially shaping your AI systems.
